The Machine Learning and Media Informatics research group is a specialised research team within the Department of Computer Science.

We research general questions of machine intelligence and AI, with an emphasis on deep learning. We are especially interested in creating inductive biases that increase the robustness and generalisation of models. We apply our models to media, with a focus on music and language. We are also interested in multi-modality and emotion recognition.

Music Informatics covers computational models of music, sound analysis and generation, and music performance. For an overview presentation showcasing MIRG activities, click here.

Main Research Activities

- Inductive biases in deep learning. Building (deep) neural network models that are more robust and generalise better.

- Multimodal emotion recognition. Combining deep learning on video, text, and audio to recognise emotions in videos.

- Music information retrieval. Adaptive models of score, MIDI, and audio data, with the goals of genre classification, similarity estimation, and style and user modelling.

- Music signal analysis. Automatic music transcription, multi-pitch detection, onset detection, instrument identification.

- Computational musicology. Algorithms for music segmentation, representation, hierarchical structuring, and analysis.

- Music knowledge representation. Representation of music on multiple levels, logical structures for music, standardisation activities.

- Applications. Exploration and exploitation of new technological approaches for applications such as music search and recommendation, as well as analysis and classification of biological and industrial sounds.

Some current and recent MIRG members at ISMIR 2018 in Paris:

(Left to right: Shahar Elisha, Srikanth Cherla, Daniel Wolff, Tillman Weyde, Andreas Jansson, Radha Kopparti, Reinier de Valk)

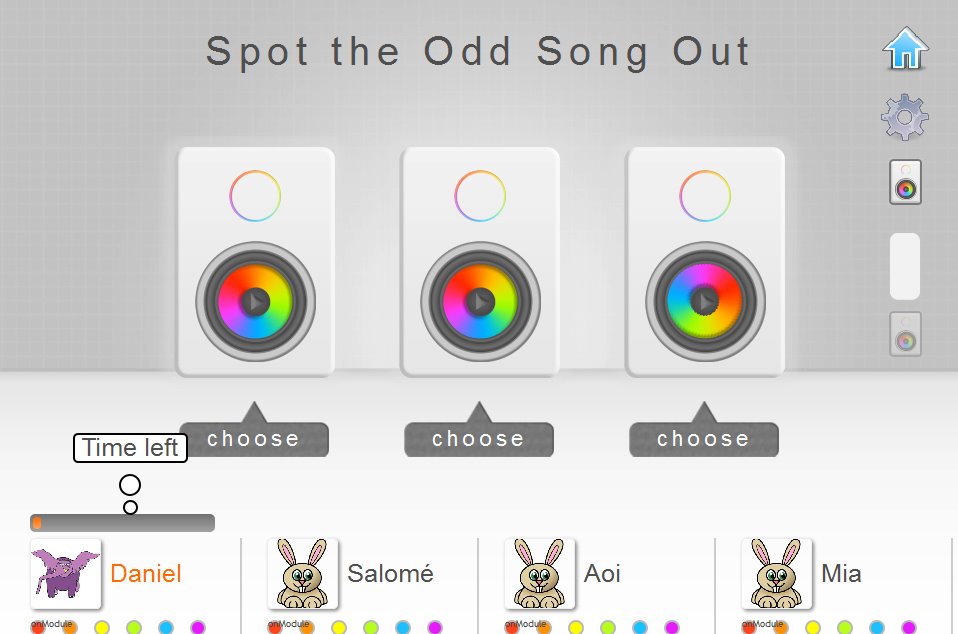

We are collecting a new music annotation dataset that includes similarity, tempo, and rhythm data. Our multi-player music game “Spot the Odd Song Out” can be played here.

A presentation at the Music Tech Fest in London: